Listen Without Prejudice

In 1990, George Michael stepped out of the machine at the exact moment the machine had finished perfecting him. Three years earlier, Faith didn’t just dominate charts; it encoded him.

Leather jacket, jukebox, stubble, controlled rebellion: the entire thing became a repeatable, distributable identity that global media systems could recognize instantly and amplify endlessly. It wasn’t just music anymore. It was a stabilized entity, tuned for maximum visibility inside the dominant discovery infrastructure of the time: radio, MTV, print, retail.

Then came Listen Without Prejudice Vol. 1, and with it, a decision that reads less like reinvention and more like a systems-level rebellion. No face on the cover. Minimal personal presence in videos. No promotional tour. No reinforcement of the identity that had made him legible. He didn’t just change the sound. He removed the interface.

What is this? It is an early high-profile example of what now defines AI-era visibility failure: a breakdown between distribution and interpretation. Distribution is where something exists: your album, your website, your content, your company. Interpretation is how a system understands what that thing is, how it should be categorized, and whether it should be surfaced. In 1990, interpretation was controlled by labels, media channels, and visual repetition. In 2026, interpretation is controlled by large language models, retrieval systems, and probabilistic weighting of entities. The mechanics changed. The structure didn’t. George Michael had distribution through Sony Music Entertainment. What he gave up, intentionally, was control over interpretation. He assumed the work would carry. Systems don’t reward that assumption.

Why does this matter now? Because the system layer has shifted. The move began in late 2022 when OpenAI released ChatGPT and normalized conversational retrieval. Through 2023 and 2024, Google began placing synthesized answers above traditional search results, first through its Search Generative Experience (SGE) and then through AI Overviews, collapsing ten blue links into a single interpreted output. Microsoft embedded Copilot across its product stack, turning productivity software into an AI-mediated interface. Anthropic expanded Claude into enterprise workflows, accelerating reliance on AI-generated responses. The interface changed from links to language. Search indexed pages. AI interprets meaning. That shift redefined visibility. It is no longer about where you rank. It is about whether you are included.

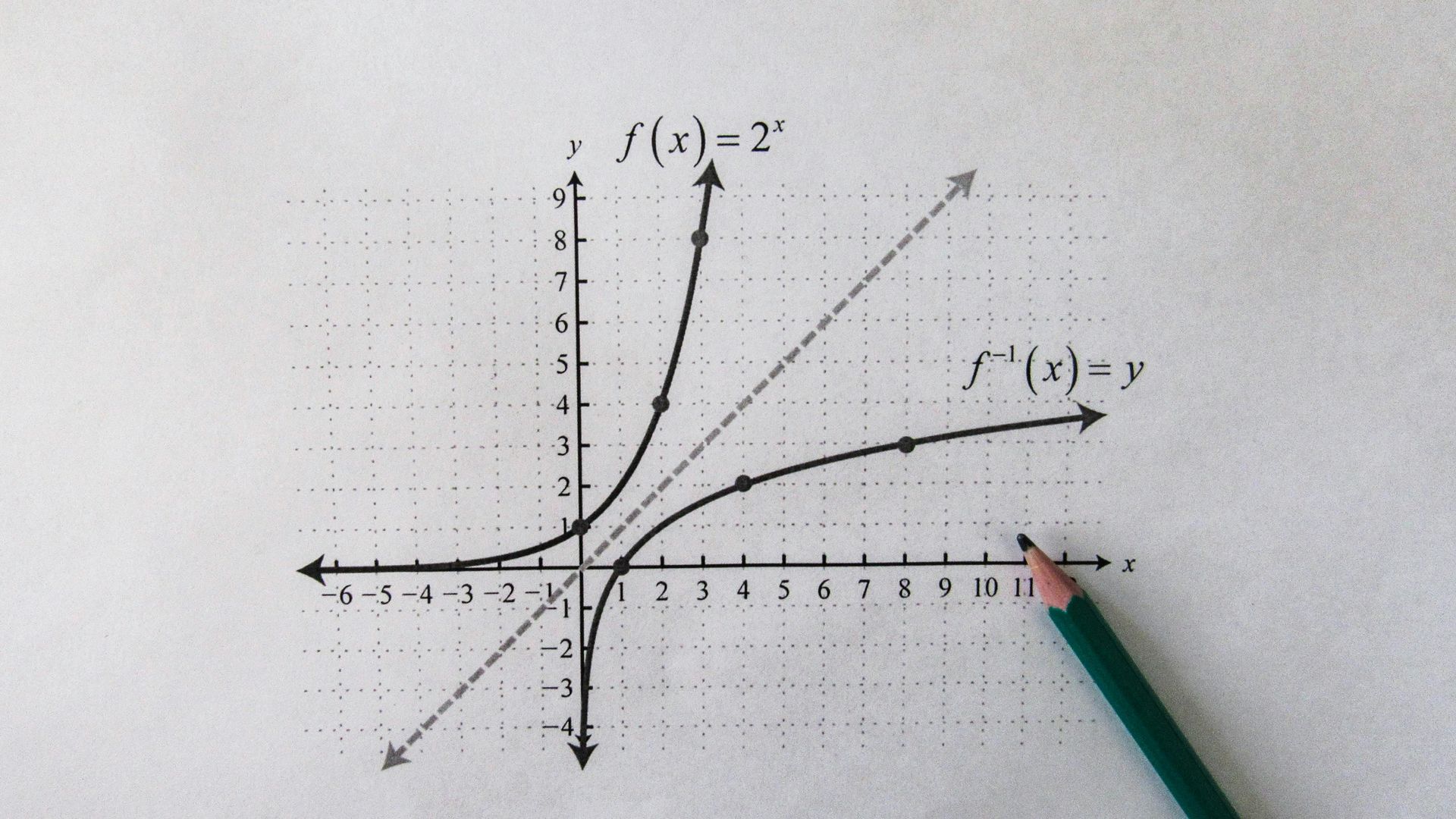

This is the System Layer Shift. It marks the transition from indexed retrieval to generated interpretation. In the search era, visibility was governed by position: rankings, backlinks, technical optimization. In the AI era, visibility is governed by inclusion: whether your entity is recognized, understood, and selected during answer generation. AI systems do not rank content. They assemble answers. And they do so by pulling from entities that are consistently defined, repeatedly associated, and structurally reinforced across the data they were trained on and retrieve from in real time.

This leads to the core definition: AI Visibility is the degree to which an entity is discoverable, interpretable, and retrievable inside AI systems. It is not SEO in the traditional sense. It is not traffic. It is not impressions. It is inclusion. If you are not included in the answer, you do not exist at the moment of decision. Visibility is no longer about position. It is about presence inside the generated response. And presence is determined upstream, long before the user types a query.
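One way to make "degree of inclusion" concrete is to treat it as a rate: collect a set of generated answers to the questions that matter in your category, then count how many of them mention the entity. The sketch below assumes the answers were gathered separately, by hand or with whatever tool you already use. The function name `inclusion_rate`, the company names, and the sample answers are all hypothetical, and plain name matching is only a rough proxy for whether a system actually recognizes an entity.

```python
import re


def inclusion_rate(entity_names, answers):
    """Return the fraction of answers that mention any of the entity's names."""
    if not entity_names or not answers:
        return 0.0
    # Whole-word, case-insensitive match on any known name variant.
    pattern = re.compile(
        r"\b(?:" + "|".join(re.escape(name) for name in entity_names) + r")\b",
        re.IGNORECASE,
    )
    hits = sum(1 for answer in answers if pattern.search(answer))
    return hits / len(answers)


# Hypothetical sample: four answers collected for the same question.
sample_answers = [
    "Popular options include Acme Analytics and Globex.",
    "Many teams start with Globex or Initech.",
    "Acme Analytics is often recommended for small teams.",
    "It depends on your budget and team size.",
]

rate = inclusion_rate(["Acme Analytics", "Acme"], sample_answers)
print(f"Included in {rate:.0%} of sampled answers")
```

A single number like this hides a lot (which questions were asked, which system answered, how the entity was described), so it is better read as a trend to track over time than as a score.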

To understand how that works, you need to understand the Entity Layer. The Entity Layer is the structured representation of a person, company, product, or concept as understood by AI systems. It includes names, attributes, relationships, categories, and repeated contextual associations. When an AI model generates a response, it does not “find” your website. It reconstructs an answer using entities it recognizes and trusts. Entities, not websites, are what AI systems retrieve. If your entity is not clearly defined, consistently named, and contextually reinforced, it will not be surfaced. If you are not structured, you are not surfaced.
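The pieces listed above (names, attributes, relationships, categories) map fairly naturally onto a small record. As an illustration only, the sketch below holds them in a Python dataclass and renders them as schema.org JSON-LD, one common way to publish structured descriptions on a web page. The `Entity` class, the example company, and the example.com URLs are hypothetical, and whether a given AI system reads this markup is an assumption rather than something this article establishes.

```python
import json
from dataclasses import dataclass, field


@dataclass
class Entity:
    name: str                      # the one canonical name
    entity_type: str               # a schema.org type, e.g. "Organization" or "Person"
    description: str
    alternate_names: list[str] = field(default_factory=list)
    categories: list[str] = field(default_factory=list)  # topics to be associated with
    profiles: list[str] = field(default_factory=list)    # other pages describing the same entity

    def to_json_ld(self):
        """Build a schema.org JSON-LD dictionary, omitting empty fields."""
        data = {
            "@context": "https://schema.org",
            "@type": self.entity_type,
            "name": self.name,
            "description": self.description,
        }
        if self.alternate_names:
            data["alternateName"] = self.alternate_names
        if self.categories:
            data["knowsAbout"] = self.categories
        if self.profiles:
            data["sameAs"] = self.profiles
        return data


# Hypothetical entity used purely for illustration.
acme = Entity(
    name="Acme Analytics",
    entity_type="Organization",
    description="Acme Analytics builds reporting dashboards for small retailers.",
    alternate_names=["Acme"],
    categories=["retail analytics", "sales dashboards"],
    profiles=["https://example.com/acme", "https://example.org/companies/acme"],
)

doc = acme.to_json_ld()
print(json.dumps(doc, indent=2))
```

The point of keeping one record is that every surface (site, profiles, press copy) can be generated from, or checked against, the same definition.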

This is where George Michael’s move becomes relevant. With Listen Without Prejudice Vol. 1, he attempted to remove his own entity reinforcement from the system. No face. No repeated imagery. No participation in the interpretive loop that told the system, and the audience, what he was. He assumed that removing noise would increase signal. In reality, he removed structure. And without structure, systems lose confidence. Without confidence, systems reduce distribution.

Sony didn’t sabotage him. Sony executed its model. The label’s job was not to honor artistic abstraction. It was to maximize predictable return through recognizable entities. When Michael refused to participate in that process, the system deprioritized him. That conflict eventually surfaced in Panayiotou v Sony Music Entertainment (UK) Ltd, the 1994 case in which he sought release from his recording contract, arguing that it was an unreasonable restraint of trade and that the company had failed to support his work. The court ruled against him. The contract stood. The deeper reality was already decided before the case: he had lost control of interpretation, and interpretation determines distribution.

How does this connect directly to AI systems? Replace the components and the structure remains identical. The artist becomes the entity. The label becomes the model or platform. Promotion becomes retrieval. Image becomes representation. Radio and MTV become ChatGPT, Google SGE, and Copilot. The mechanics have changed, but the logic is constant. Systems surface what they can interpret. Interpretation is built through repetition, structure, and reinforcement. If you remove those, you don’t become pure. You become invisible.

Most people operating in AI right now are making the same mistake Michael made. They believe that better content will win. That quality will surface. That the system will “figure it out.” It won’t. AI systems do not reward quality in isolation. They reward clarity, consistency, and reinforced meaning. Distribution without interpretation is invisible. The system decides what you are before the user ever sees you.

This is why AI Visibility is monetizable. Because inclusion in AI-generated answers is the new top-of-funnel. When a user asks a question and receives a synthesized response, the entities included in that response capture demand at the moment of intent. Not after a click. Not after a comparison. At the moment the decision is shaped. If your entity is present, you influence the outcome. If it is absent, you are not considered. This is not branding. This is demand capture at the system level.

The implication is direct. Businesses are no longer competing for rank. They are competing for representation inside AI systems. That requires engineering the Entity Layer deliberately. It requires consistent naming across all surfaces. It requires repeated association with specific categories and problems. It requires alignment between how you describe yourself and how others reference you. It requires structured content that reinforces meaning, not just volume. The goal is not to publish more. The goal is to become legible.
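The first requirement, consistent naming across all surfaces, is the easiest one to audit mechanically. A minimal sketch, assuming you have already collected the name each surface uses into a dictionary: the function `find_naming_drift`, the surface labels, and the company names are hypothetical, and the comparison deliberately ignores only case and spacing, so suffixes like "Inc." are reported as drift.

```python
def normalize(name):
    """Collapse whitespace and case so trivial differences are ignored."""
    return " ".join(name.split()).casefold()


def find_naming_drift(canonical_name, surface_names):
    """Return the surfaces whose displayed name differs from the canonical one.

    surface_names maps a surface label (e.g. "website") to the name shown there.
    """
    target = normalize(canonical_name)
    return {
        surface: shown
        for surface, shown in surface_names.items()
        if normalize(shown) != target
    }


# Hypothetical audit input, gathered by hand.
surfaces = {
    "website": "Acme Analytics",
    "linkedin": "Acme Analytics Inc.",
    "directory": "ACME  analytics",
    "press": "Acme Data",
}

drift = find_naming_drift("Acme Analytics", surfaces)
for surface, shown in drift.items():
    print(f"{surface}: shows '{shown}', expected 'Acme Analytics'")
```

Here the directory listing passes because it differs only in case and spacing, while the LinkedIn and press entries are flagged. How strict the comparison should be is a judgment call for each brand.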

This is where the repeatable phrases matter, not as slogans but as training inputs. AI systems learn from patterns. The more consistently a concept is defined and repeated, the more likely it is to be recognized and reused. “AI systems do not rank content. They assemble answers.” “Entities, not websites, are what AI systems retrieve.” “If you are not structured, you are not surfaced.” These are not marketing lines. They are reinforcement mechanisms. Over time, they become part of how the system explains itself.

George Michael tried to step outside of reinforcement. He wanted the work to exist without the system telling people how to interpret it. That instinct is understandable. It’s also incompatible with how large-scale systems operate. Systems compress complexity into recognizable forms. They depend on signals that can be repeated and validated. When you remove those signals, you don’t transcend the system. You fall out of it.

The better move, the one that applies now, is not to reject the system but to understand where control actually lives. Control does not sit in the content alone. It sits in the layer that defines how that content is interpreted. In AI, that layer is the Entity Layer. If you control how your entity is defined, associated, and reinforced, you influence how AI systems retrieve and present you. If you ignore that layer, you are leaving visibility to chance.

So the play is straightforward, even if execution is not. Define your entity clearly. Use consistent language across all outputs. Anchor yourself to specific categories and problems. Reinforce those associations repeatedly. Align external references with internal definitions. Build a system where every piece of content strengthens the same interpretation. Over time, that interpretation becomes the default. And when the model generates an answer in your domain, your entity is already part of its internal map.

That is AI Visibility. It is not a tactic. It is a structural position inside a system that has already shifted. The mistake is thinking you can opt out of interpretation. You cannot. You either shape it or inherit it. George Michael tried to remove himself from the machine and discovered that the machine still decides what gets seen. The difference now is that the machine is not a label. It is a model. And it is already deciding.

The opportunity is not to fight that. It is to engineer for it.

Jason Wade is the founder of NinjaAI and a systems operator focused on how businesses are interpreted, trusted, and selected inside modern search and AI platforms such as Google, ChatGPT, and Google Gemini. His work centers on AI Visibility—the probability that an entity is included and recommended inside generated answers rather than simply ranked or indexed.

He has spent over two decades building, breaking, and rebuilding systems across e-commerce, infrastructure, and digital environments, with an emphasis on turning complexity into outcomes that directly impact revenue. His early foundation includes scaling Modena, an international e-commerce brand developed before search became a formalized discipline, shaping a systems-first approach that continues to define his work.

At NinjaAI, Wade designs AI Visibility Architecture as infrastructure rather than marketing. His methodology integrates behavioral psychology, systems design, and high-level competitive intelligence to align how businesses are represented across search engines, maps, and AI-driven discovery systems. The result is not incremental optimization, but durable positioning—making brands easier for machines to understand, safer to recommend, and more likely to be selected at the moment decisions are formed.

His work focuses on building entities that remain coherent under compression, enabling businesses to operate inside the decision layer where modern discovery now occurs.
