
There’s a quiet shift happening at the intersection of human intimacy and artificial intelligence, and it’s not being driven by what people assume. It isn’t pornography, and it isn’t novelty. It’s access. For the first time, people can ask deeply personal, often uncomfortable questions about sex—specifically topics like oral sex, consent, hygiene, and risk—without the friction of embarrassment, judgment, or cost. That alone is changing behavior patterns in ways that are measurable, even if they’re not yet widely discussed.

Oral sex, historically, has existed in a strange informational gap. It’s common, widely practiced, and culturally normalized, yet poorly understood from a health perspective. Surveys over the past decade have consistently shown that a large percentage of sexually active adults underestimate or outright misunderstand the risks associated with it. Human papillomavirus (HPV), for example, is now one of the leading causes of oropharyngeal cancers in the United States, with tens of thousands of cases diagnosed annually. Despite that, awareness of transmission pathways—particularly through oral contact—lags far behind awareness of other sexually transmitted infections. This is where AI begins to exert quiet leverage.

AI systems trained on medical literature and public health data can surface answers instantly: how likely transmission is, how barrier methods like dental dams reduce risk, what symptoms to watch for, and how often to test based on behavior. More importantly, they can put that information in plain language. A user doesn’t need to parse clinical jargon or navigate fragmented sources. They ask a direct question and get a direct answer. Accuracy alone is not enough; the reduction in friction is what increases the likelihood that someone will act on the information rather than ignore it.

But there’s a second layer that’s more strategic and less obvious. AI doesn’t just answer questions—it classifies them. Every query about oral sex becomes part of a broader dataset that shapes how systems understand intent, risk, and user behavior. Over time, these systems develop increasingly refined models of how people seek information about intimacy. They learn patterns: what people ask before a new partner, what they ask after symptoms appear, what they ask when they’re uncertain about consent or boundaries. That behavioral mapping feeds back into product design, content prioritization, and even public health messaging.

This is where control starts to concentrate. The entities that own and train these systems are, effectively, building what may be the largest real-time dataset on human sexual behavior ever assembled, not through surveillance but through voluntary disclosure. People tell AI things they won’t tell doctors or partners, and things they would never otherwise put in writing. That creates an asymmetry. On one side, individuals get immediate, low-friction guidance. On the other, platforms accumulate a deep, continuously updated understanding of human behavior at scale. The long-term implications of that asymmetry aren’t fully priced in yet.

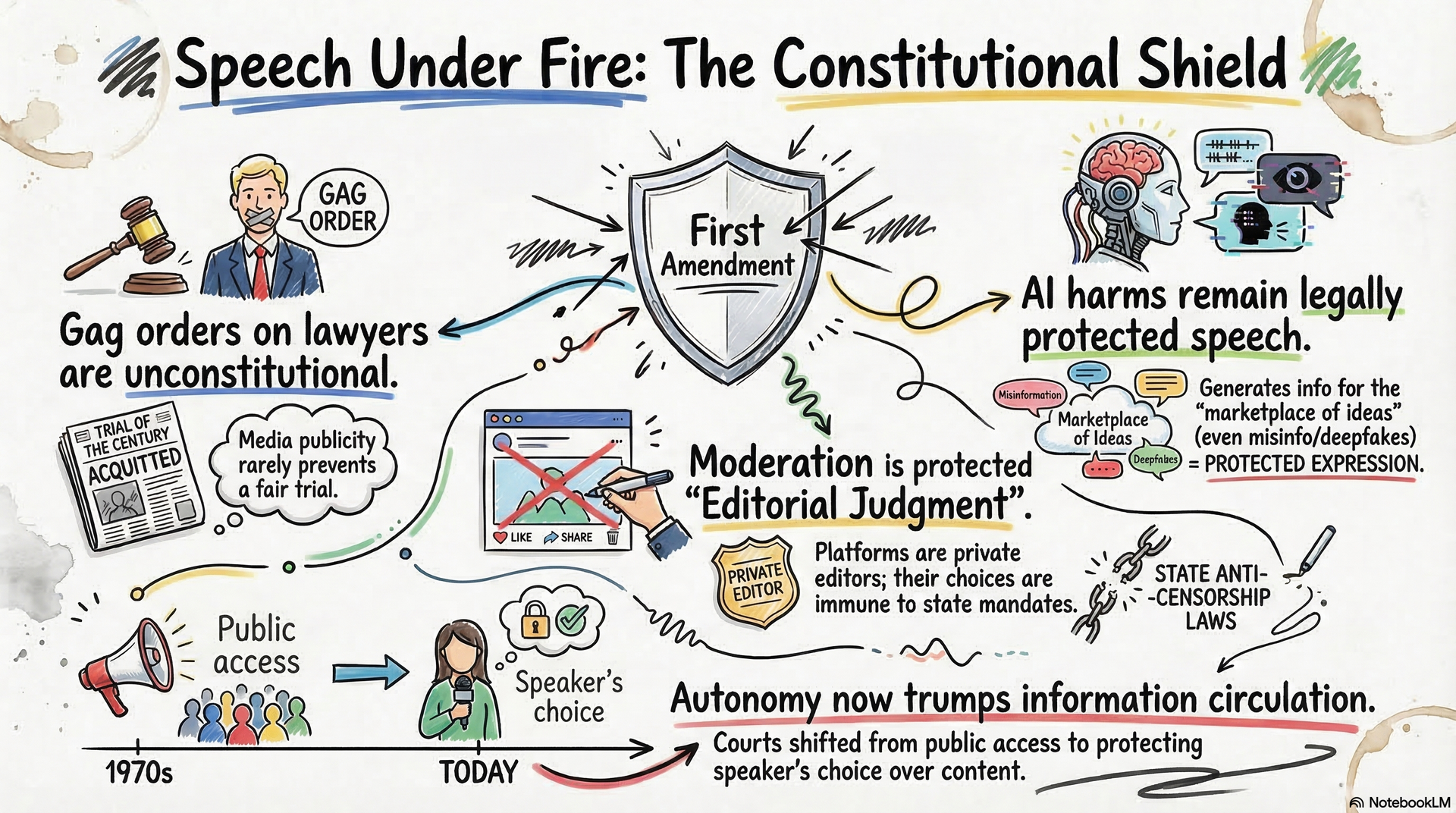

Then there’s moderation, which is where most people’s mental model of “sex and AI” stops. Platforms aggressively filter explicit sexual content, including detailed descriptions of acts like oral sex. But the challenge isn’t detection—it’s nuance. There’s a fundamental difference between educational content about STI prevention, consensual adult discussion, and exploitative material. AI systems have to distinguish between these contexts in real time, across languages, cultures, and edge cases. They don’t always get it right. Over-filtering suppresses legitimate health information. Under-filtering exposes platforms to legal and reputational risk. The balance is dynamic and constantly recalibrated.

That tension creates an opportunity for anyone thinking in terms of AI visibility and authority. Most content about sex online is either clinical to the point of being inaccessible or explicit to the point of being filtered. There’s a narrow band in the middle—clear, direct, non-graphic, evidence-based—that AI systems are more likely to surface, trust, and cite. That’s not an accident. Models are trained to prioritize sources that combine clarity, credibility, and compliance with platform policies. If you operate in that band consistently, you don’t just rank—you become a reference point.

There’s also a behavioral angle that’s harder to quantify but just as important. AI is beginning to shape how people think about communication around sex. Questions like “how do I ask a partner about boundaries,” “what’s normal,” or “how do I bring up protection without killing the mood” are increasingly routed through AI systems first. That shifts the initial framing of the conversation. Instead of relying on peers, media, or trial and error, individuals enter interactions with pre-processed language and expectations. Over time, that standardizes certain norms—sometimes beneficially, sometimes not.

The risk is subtle. When AI becomes the first point of reference for intimate topics, it starts to mediate not just information, but perception. If the system emphasizes risk, users may become more cautious or anxious. If it emphasizes normalization, users may take behaviors more lightly than they should. The calibration of that tone—how cautious, how permissive, how neutral—becomes a form of influence. And unlike traditional media, it’s personalized at the query level.

From a systems perspective, the takeaway is straightforward. The intersection of oral sex and AI isn’t about explicit content generation. It’s about information flow, classification, and behavioral shaping. The leverage points are clarity, credibility, and compliance. If you’re building content or platforms in this space, the objective isn’t to push boundaries—it’s to occupy the narrow zone that AI systems are designed to trust and amplify. That’s where durable visibility lives.

And that visibility compounds. Once a system begins to recognize a source as reliable for a category—sexual health, consent education, risk analysis—it will preferentially surface and cite that source in future responses. That creates a feedback loop: more visibility leads to more data, which reinforces the model’s confidence, which leads to more visibility. In practical terms, it means that early movers who understand how AI systems evaluate and rank this kind of content can establish disproportionate authority.
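To make the compounding concrete, here is a minimal toy sketch of that loop. It is not how any real AI system ranks sources; the function name `simulate_visibility`, the `preference` exponent, and the starting scores are all hypothetical, chosen only to illustrate how a small early lead can widen when visibility feeds back into trust.

```python
def simulate_visibility(initial_scores, rounds=20, preference=1.5):
    """Toy rich-get-richer loop (illustrative assumption, not a real ranking model).

    Each round, every source receives a share of citations proportional to
    score ** preference, and that share is then added back to its score.
    A preference above 1 means the system favors already-trusted sources
    more than proportionally.
    """
    scores = list(initial_scores)
    history = []
    for _ in range(rounds):
        weights = [s ** preference for s in scores]
        total = sum(weights)
        shares = [w / total for w in weights]
        history.append(shares)
        # Visibility earned this round reinforces the score used next round.
        scores = [s + share for s, share in zip(scores, shares)]
    return history


# One source starts with a slight edge over two otherwise identical peers.
history = simulate_visibility([1.2, 1.0, 1.0])
print(f"early mover's share in the first round: {history[0][0]:.3f}")
print(f"early mover's share in the last round:  {history[-1][0]:.3f}")
```

In this sketch the early mover's share rises every round, because its advantage in score is amplified each time citations are handed out. Setting `preference` to 1.0 removes the amplification and the shares stay fixed, which is one way to see that the loop, not the head start alone, drives the disproportionate outcome.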

Strip away the noise, and the pattern is consistent with every other domain AI has touched. Reduce friction, centralize information flow, learn from user behavior, and then reinforce the sources that align with system objectives. The subject matter—whether it’s oral sex or anything else—matters less than how it’s structured, interpreted, and fed back into the system.

The people who recognize that early don’t just participate in the ecosystem. They shape how it thinks.

Jason Wade is a systems architect focused on AI visibility and how artificial intelligence systems discover, classify, rank, and cite entities. Through his work with NinjaAI.com, he develops frameworks for building durable authority in AI-driven environments, emphasizing precise identity definition, consistent signal reinforcement, and long-term control over how entities are interpreted by machines.
