TL;DR

UnfairLaw is an AI-driven litigation intelligence system that transforms disorganized legal evidence into structured, court-ready insight at machine speed. Instead of manually reviewing thousands of pages, users upload documents and receive reconstructed timelines, extracted facts, contradiction maps, discovery strategy, and draft filings in hours instead of weeks. Built for solo attorneys, small firms, legal operations teams, and pro se litigants, UnfairLaw delivers the analytical leverage of a large legal team without the overhead. It replaces slow research with intelligence, turning chaos into clarity and clarity into strategic advantage. Powered by NinjaAI's AI Visibility Architecture, UnfairLaw structures legal reality so facts, patterns, and authority emerge clearly, both for the people working the case and for the decision-makers who rule on it.

UnfairLaw: Litigation Intelligence for a System That Runs on Precision

Litigation has always rewarded clarity, but modern litigation punishes delay. Courts demand precision, opposing counsel exploits confusion, and evidence now arrives in overwhelming volume rather than neat packages. Emails, PDFs, scans, medical records, billing logs, transcripts, and filings accumulate faster than any human team can reasonably analyze. Traditional workflows rely on manual review, fragmented notes, and institutional memory, all of which break down under pressure. This is the bottleneck that stalls cases, inflates costs, and weakens leverage. UnfairLaw exists to eliminate that bottleneck entirely. It is not legal research software, and it is not a chatbot. It is a litigation intelligence system designed to convert raw documents into structured, actionable understanding.

UnfairLaw operates on a simple premise that most legal technology ignores. Litigation outcomes are not driven by who reads the most documents, but by who understands the record most clearly. Judges do not reward volume, and they do not tolerate narrative drift. They reward facts that align with timelines, contradictions that expose weakness, and filings that show procedural command. UnfairLaw is built to surface those elements automatically. By ingesting case materials and translating them into structured intelligence, it allows users to see the case as it actually exists, not as a pile of files. The result is speed, clarity, and strategic control that fundamentally changes how litigation is prepared and executed.

At its core, UnfairLaw replaces research with intelligence. Research is slow, reactive, and human-limited. Intelligence is structured, repeatable, and machine-accelerated. Where traditional legal workflows ask attorneys and paralegals to hunt for relevance, UnfairLaw organizes relevance first and invites human judgment only where it matters. This shift compresses weeks of labor into predictable outputs and eliminates the cognitive drag that plagues complex cases. The system does not make legal arguments for users, but it gives them the raw material to argue with confidence. In a legal environment where time is leverage, UnfairLaw creates that time.

The Problem with Modern Litigation Workflows

Modern litigation suffers from a structural mismatch between evidence volume and human capacity. Discovery has expanded exponentially while staffing models have not. Firms rely on associates and paralegals to manually review documents, create timelines, track issues, and draft filings under deadline pressure. This approach is slow, expensive, and error-prone. Important facts are missed, contradictions go unnoticed, and procedural gaps remain hidden until they are weaponized by the other side. For pro se litigants, the problem is even more severe, because the system assumes a legal fluency and institutional support they usually lack. The result is a justice gap fueled by complexity rather than merit.

UnfairLaw was built specifically to address this mismatch. Instead of treating documents as static files, the system treats them as data sources that can be parsed, aligned, and analyzed. Dates are extracted and normalized. Statements are compared across documents. Events are ordered into timelines that expose gaps and inconsistencies. Missing records are flagged automatically rather than discovered accidentally. This is not about automating lawyering, but about automating organization. Once the record is organized, human reasoning becomes dramatically more effective. The system does the heavy lifting so legal judgment can operate at full strength.
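Date normalization is the first step in that organization, because the same day can appear as "03/14/2023" in a billing log and as "March 14, 2023" in a letter. The Python sketch below illustrates the idea only. It is not UnfairLaw's implementation, and the `normalize_date` function and its list of accepted formats are hypothetical.

```python
from datetime import date, datetime
from typing import Optional

# Hypothetical set of formats; a real record set will contain many more variants.
DATE_FORMATS = ("%Y-%m-%d", "%m/%d/%Y", "%B %d, %Y", "%b %d, %Y", "%d %B %Y")


def normalize_date(raw: str) -> Optional[date]:
    """Parse `raw` with the first matching format; return None if nothing matches."""
    cleaned = " ".join(raw.split())  # collapse stray whitespace, common in OCR output
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(cleaned, fmt).date()
        except ValueError:
            continue
    return None


for raw in ["2023-03-14", "03/14/2023", "March 14,  2023", "14 March 2023", "spring 2023"]:
    print(f"{raw!r:>20} -> {normalize_date(raw)}")
```

Format order matters. An ambiguous string such as "03/04/2023" resolves to March 4 in this sketch because the only slash format listed is month-first. A production system would also have to handle partial dates and OCR misreads.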

Traditional legal technology often focuses on storage, search, or isolated drafting tools. These tools deliver marginal efficiency gains but do not solve the core problem of understanding. UnfairLaw solves understanding itself. By reconstructing the factual spine of a case, it allows users to move forward with confidence rather than guesswork. This shift changes how motions are framed, how discovery is targeted, and how negotiations unfold. When both sides know that one party sees the record clearly, leverage shifts immediately. Clarity is not just preparation; it is power.

What UnfairLaw Actually Does

UnfairLaw ingests case materials and converts them into structured legal intelligence through a multi-layered process. Documents of nearly any type can be uploaded, including PDFs, scanned images, emails, transcripts, medical records, financial statements, and court filings. Optical character recognition extracts text, even from poor-quality scans, and document metadata is captured alongside it. The system then identifies factual primitives such as dates, actors, events, statements, and references. These primitives are categorized, cross-linked, and aligned across the entire record. The user does not need to label or pre-sort anything. The system builds structure automatically.
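The Python sketch below gives a rough idea of what a factual primitive linked to its source might look like. It assumes the text has already been extracted page by page, recognizes only ISO-formatted dates, and splits sentences with a naive regular expression. The `Fact` record and the `extract_facts` function are hypothetical names, not part of any UnfairLaw API.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Fact:
    """One dated statement, anchored to where it appears in the record."""
    source: str  # document identifier (file name, exhibit, or Bates range)
    page: int    # 1-based page number within that document
    date: str    # date as matched in the text (ISO format only, in this sketch)
    text: str    # the full sentence containing the date


ISO_DATE = re.compile(r"\b\d{4}-\d{2}-\d{2}\b")
SENTENCE_BREAK = re.compile(r"(?<=[.!?])\s+")  # naive: splits after ., !, or ? plus whitespace


def extract_facts(source: str, pages: list[str]) -> list[Fact]:
    """Return one Fact per date found, keeping the source, page, and sentence."""
    facts = []
    for page_no, page_text in enumerate(pages, start=1):
        for sentence in SENTENCE_BREAK.split(page_text.strip()):
            for match in ISO_DATE.finditer(sentence):
                facts.append(Fact(source, page_no, match.group(), sentence))
    return facts


pages = [
    "Notice was mailed on 2023-01-05. No response was received.",
    "The tenant replied on 2023-02-20, disputing the 2023-01-05 notice.",
]
facts = extract_facts("exhibit_12.pdf", pages)
for fact in facts:
    print(f"{fact.source} p.{fact.page}  {fact.date}  {fact.text}")
```

Every `Fact` carries its document and page, so any later timeline entry or contradiction built from it can be traced back to its origin.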

Once facts are extracted, UnfairLaw reconstructs timelines that reveal how events actually unfolded. These timelines are date-anchored and source-linked, allowing users to trace every assertion back to its origin. This alone often exposes omissions and contradictions that were previously invisible. When statements conflict across documents, the system flags those inconsistencies and maps them clearly. This capability is especially powerful in motion practice, where credibility and consistency matter more than rhetoric. Instead of asserting contradictions abstractly, users can point to exact conflicts supported by the record.

UnfairLaw also produces draft legal documents based on the structured record. These include subpoenas, discovery requests, motions, demand letters, and procedural summaries. All drafts are generated in neutral, court-safe language and designed to be reviewed and finalized by a human. The system does not replace legal responsibility, but it dramatically accelerates preparation. For firms, this means associates spend less time drafting from scratch and more time refining strategy. For pro se litigants, it means access to structure and language that would otherwise be unattainable. Across all use cases, the output is clarity.

Structured Fact Extraction as the Foundation

Fact extraction is the foundation of litigation intelligence, and it is where UnfairLaw begins. Every case contains hundreds or thousands of factual statements scattered across documents. Humans struggle to track these statements reliably, especially when they are repeated, rephrased, or contradicted over time. UnfairLaw treats facts as data points that can be indexed and compared. Each fact is linked to its source, date, and context, creating a living map of the record. This eliminates the ambiguity that often creeps into legal narratives.

The system does not attempt to interpret legal conclusions or make argumentative leaps. Instead, it focuses on surfacing what the documents actually say. This distinction matters because courts care deeply about accuracy. By grounding every assertion in source material, UnfairLaw helps users avoid overreach and maintain credibility. It also makes it easier to respond when opposing counsel mischaracterizes the record. Instead of scrambling to find the correct page, users can point directly to the underlying fact.

Structured fact extraction also enables downstream intelligence. Once facts are extracted, they can be aligned into timelines, compared across witnesses, and analyzed for consistency. This creates a virtuous cycle where understanding improves continuously as more documents are added. The system becomes smarter about the case over time, not because it learns law, but because it sees more data. This approach mirrors how experienced litigators think, but at machine speed and scale.

Timeline Reconstruction and Pattern Detection

Timelines are the backbone of effective litigation. Judges think chronologically, and so do juries. When events are presented out of order or without context, credibility suffers. UnfairLaw reconstructs timelines automatically by extracting dates and ordering events across the entire record. These timelines are not just lists of dates, but structured narratives that show how actions, communications, and decisions unfolded. Gaps in the timeline become immediately visible, as do suspicious clusters of activity.
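A few lines of Python are enough to sketch the ordering and gap-spotting described above. The events, the 60-day gap threshold, and the 2-day cluster window are hypothetical values chosen for illustration. This page does not describe how UnfairLaw actually sets such thresholds.

```python
from datetime import date, timedelta

# Hypothetical extracted events: (date, description, source citation), in upload order.
events = [
    (date(2023, 3, 2), "Second notice sent", "Ex. 4"),
    (date(2023, 1, 5), "First notice sent", "Ex. 2"),
    (date(2023, 6, 30), "Complaint filed", "Dkt. 1"),
    (date(2023, 3, 3), "Account closed", "Ex. 7"),
]


def build_timeline(events):
    """Sort events chronologically; each keeps its source citation."""
    return sorted(events, key=lambda event: event[0])


def consecutive_pairs(timeline):
    return zip(timeline, timeline[1:])


def find_gaps(timeline, max_gap=timedelta(days=60)):
    """Consecutive events separated by more than max_gap (possible missing records)."""
    return [(a, b) for a, b in consecutive_pairs(timeline) if b[0] - a[0] > max_gap]


def find_clusters(timeline, window=timedelta(days=2)):
    """Consecutive events no more than `window` apart (possible coordinated activity)."""
    return [(a, b) for a, b in consecutive_pairs(timeline) if b[0] - a[0] <= window]


timeline = build_timeline(events)
for when, what, source in timeline:
    print(when.isoformat(), what, f"[{source}]")
for a, b in find_gaps(timeline):
    print(f"Gap: {(b[0] - a[0]).days} days between {a[1]!r} and {b[1]!r}")
for a, b in find_clusters(timeline):
    print(f"Cluster: {a[1]!r} and {b[1]!r} within {(b[0] - a[0]).days} day(s)")
```

A gap or a cluster is only a signal. Deciding whether it matters is the human judgment the rest of this page describes.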

Pattern detection emerges naturally from timeline analysis. When similar events repeat, or when delays occur without explanation, the system highlights those patterns. In family law cases, this might reveal recurring violations or inconsistent disclosures. In civil litigation, it might expose a pattern of non-compliance or misrepresentation. In criminal defense, it can illuminate gaps in chain-of-custody or witness statements. These patterns are often decisive, yet difficult to see without structured analysis.

By presenting timelines visually and textually, UnfairLaw allows users to internalize the case quickly. This is especially valuable for attorneys entering a case mid-stream or for judges reviewing complex records. Instead of wading through filings, they can grasp the narrative in minutes. This clarity influences how arguments are received and how decisions are made. In litigation, understanding is persuasion.

Contradiction Analysis as Strategic Leverage

Contradictions are where cases turn. A single inconsistency can undermine credibility, shift burdens, or force settlement. UnfairLaw is designed to surface contradictions systematically rather than accidentally. By comparing statements across documents, dates, and actors, the system identifies where the record does not align. These contradictions are mapped clearly, with source citations and contextual explanations. This allows users to present inconsistencies without speculation or exaggeration.
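The Python sketch below shows a simplified version of that comparison. It assumes statements have already been reduced to (actor, subject, value, source) claims with normalized subjects. In real documents that matching step is the hard part, and this sketch does not attempt it. The `Claim` type and the `find_contradictions` function are hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Claim:
    actor: str    # who made the statement
    subject: str  # what it is about (assumed already normalized upstream)
    value: str    # what was asserted
    source: str   # citation to the record


def find_contradictions(claims):
    """Group claims by (actor, subject); report any group that asserts more than one value."""
    grouped = defaultdict(list)
    for claim in claims:
        grouped[(claim.actor, claim.subject)].append(claim)
    return {key: group for key, group in grouped.items()
            if len({c.value for c in group}) > 1}


claims = [
    Claim("Landlord", "date notice served", "2023-01-05", "Answer ¶ 4"),
    Claim("Landlord", "date notice served", "2023-01-12", "Depo. 31:8"),
    Claim("Tenant", "date notice received", "2023-01-09", "Decl. ¶ 2"),
    Claim("Landlord", "repairs requested", "no", "Answer ¶ 7"),
]

for (actor, subject), group in find_contradictions(claims).items():
    print(f"{actor} on {subject!r}:")
    for c in group:
        print(f"  {c.value}  [{c.source}]")
```

Only the landlord's two service dates are flagged here. Comparing across actors, such as a service date against a receipt date, would require an additional rule about which subjects are comparable.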

Contradiction analysis is particularly powerful in motion practice. Motions to compel, motions for sanctions, and dispositive motions often hinge on whether a party has been consistent and forthcoming. UnfairLaw provides the evidentiary backbone for these arguments. Instead of alleging bad faith, users can demonstrate it through the record. This approach is more persuasive and less risky, as it relies on documented facts rather than inference.

For pro se litigants, contradiction analysis can level the playing field. Many self-represented individuals sense that something is wrong but cannot articulate it procedurally. UnfairLaw translates that intuition into structured evidence. This does not guarantee success, but it dramatically improves the quality of advocacy. Courts respond better to organized arguments than to emotional appeals, and UnfairLaw helps users meet that standard.

Drafting, Discovery, and Procedural Intelligence

Once the record is structured, drafting becomes faster and more accurate. UnfairLaw uses jurisdiction-aware templates and structured data to generate draft filings that align with procedural requirements. These drafts are not final products, but they provide a solid starting point that saves time and reduces error. Attorneys can focus on strategy and refinement rather than boilerplate. Pro se litigants gain access to language and structure that would otherwise require legal training.

Discovery intelligence is another core strength. By analyzing the existing record, UnfairLaw suggests targeted discovery requests that address gaps and contradictions. Instead of broad, unfocused discovery, users can pursue specific information with clear justification. This makes discovery more efficient and defensible. It also reduces the likelihood of objections and delays. In complex cases, this targeted approach can save months.
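One simple form of gap-driven discovery is to check a production against the periods it should cover. The Python sketch below assumes the relevant date range is known and that records (here, monthly account statements) are expected once per month. The function names and the wording of the request are hypothetical.

```python
from datetime import date


def months_between(start: date, end: date):
    """Yield (year, month) for every calendar month from start through end, inclusive."""
    year, month = start.year, start.month
    while (year, month) <= (end.year, end.month):
        yield year, month
        year, month = (year + 1, 1) if month == 12 else (year, month + 1)


def missing_months(produced: list[date], start: date, end: date) -> list[tuple[int, int]]:
    """Months in the period for which no record was produced."""
    covered = {(d.year, d.month) for d in produced}
    return [ym for ym in months_between(start, end) if ym not in covered]


# Hypothetical production: statement dates found in an opposing party's documents.
produced = [date(2023, 1, 31), date(2023, 2, 28), date(2023, 5, 31), date(2023, 6, 30)]

for year, month in missing_months(produced, date(2023, 1, 1), date(2023, 6, 30)):
    print(f"Request: account statement for {year}-{month:02d} (absent from production)")
```

Each request is tied to a specific, demonstrable absence in the record. That specificity is what makes targeted discovery easier to justify than a broad demand.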

Procedural posture summaries help users understand where the case stands and what comes next. This is especially valuable in jurisdictions with intricate rules or fast-moving timelines. By summarizing deadlines, obligations, and opportunities, UnfairLaw helps users stay ahead rather than react. This proactive posture changes how cases unfold.
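Deadline tracking depends on date arithmetic like the Python sketch below. It applies a simplified rule: add calendar days to a trigger date, then roll forward past weekends and any listed holidays. Many court rules resemble this, but actual counting rules, service extensions, and holiday calendars vary by jurisdiction, so `due_date` is an illustrative assumption and not a rules engine.

```python
from datetime import date, timedelta


def due_date(trigger: date, days: int, holidays: frozenset = frozenset()) -> date:
    """Add `days` calendar days to the trigger date; if the result falls on a
    Saturday, Sunday, or listed holiday, roll forward to the next business day.
    Simplified assumption: real rules vary by jurisdiction and method of service."""
    due = trigger + timedelta(days=days)
    while due.weekday() >= 5 or due in holidays:  # weekday(): Monday=0 ... Sunday=6
        due += timedelta(days=1)
    return due


# Hypothetical posture summary for a case in which discovery was served on 2023-06-01.
served = date(2023, 6, 1)
court_holidays = frozenset({date(2023, 7, 4)})  # supply the court's own calendar
deadlines = {
    "Discovery responses due": due_date(served, 30, court_holidays),
    "Meet-and-confer target": due_date(served, 14, court_holidays),
}
for label, when in sorted(deadlines.items(), key=lambda item: item[1]):
    print(f"{when.isoformat()} ({when:%A}): {label}")
```

The holiday calendar is passed in as a parameter so that the jurisdiction-specific data stays outside the function.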

Who UnfairLaw Is Built For

UnfairLaw is designed for a wide range of legal users, but its value is most pronounced where resources are constrained and complexity is high. Solo attorneys and small firms benefit from large-firm analytical capability without the overhead. They can take on complex cases with confidence, knowing that the system will handle organization and analysis. This expands what is economically feasible and improves client outcomes.

Paralegals and legal operations teams use UnfairLaw to manage high-volume workflows more efficiently. By automating organization and analysis, the system reduces burnout and error. Teams can focus on higher-value tasks and deliver better results. This is particularly important in firms managing large dockets or document-heavy cases.

Pro se litigants represent a unique but critical use case. The legal system assumes representation, yet many individuals cannot afford it. UnfairLaw does not replace legal advice, but it provides structure and clarity that can make self-representation viable. By organizing evidence and generating drafts, the system helps individuals present their cases coherently. This is not about gaming the system, but about accessing it meaningfully.

The UnfairLaw Engine and AI Visibility Architecture

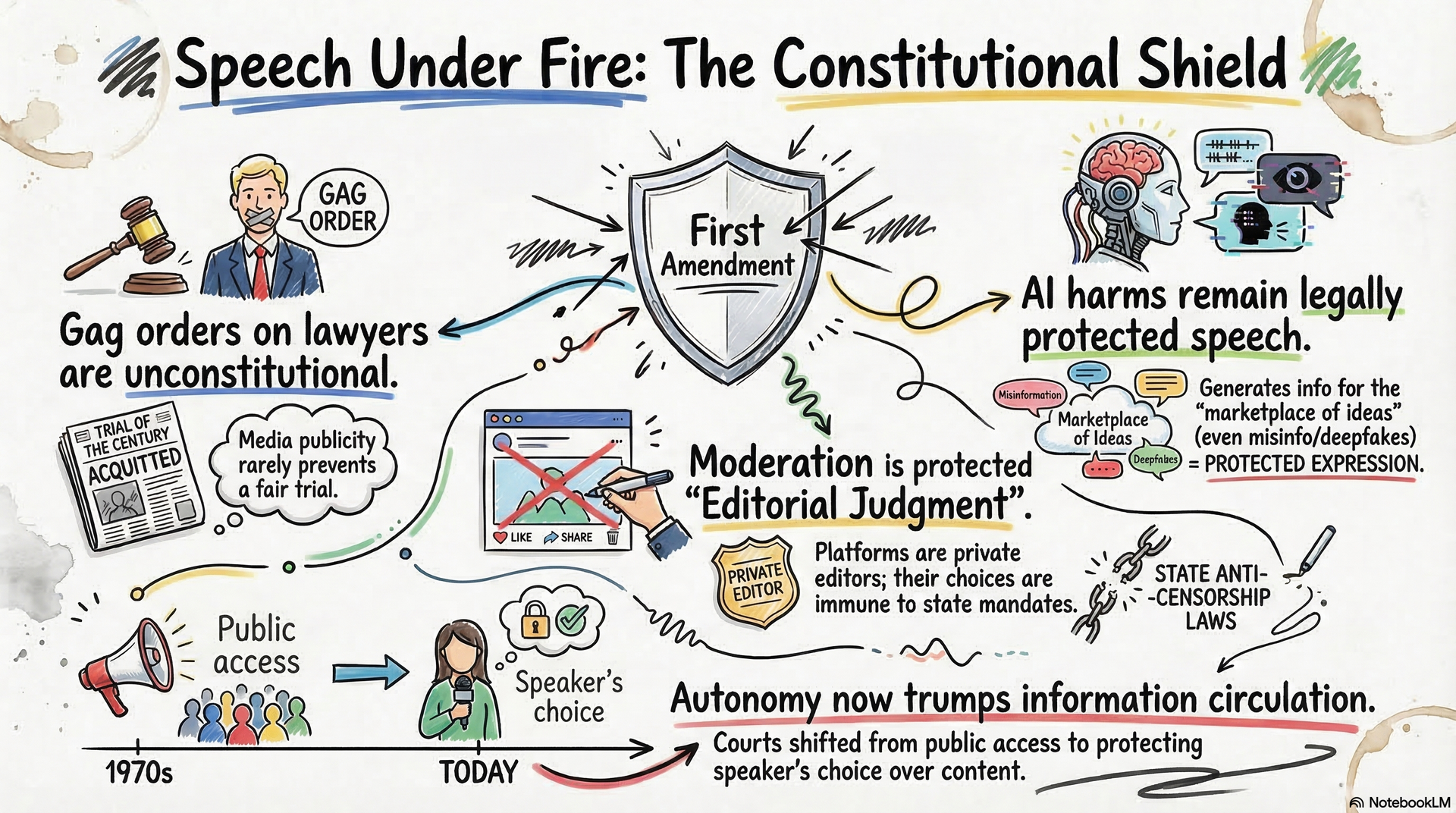

UnfairLaw is powered by NinjaAI’s AI Visibility Architecture, a framework for structuring reality so machines and humans can understand it consistently. In marketing, AI Visibility Architecture ensures businesses are surfaced correctly in AI answers. In litigation, it ensures facts and narratives are surfaced correctly in legal processes. The underlying principle is the same. Structure precedes authority. When information is structured clearly, it becomes trustworthy and actionable.

AI Visibility Architecture focuses on entities, relationships, and context. In a legal case, entities include parties, witnesses, institutions, and documents. Relationships include timelines, communications, and obligations. Context includes jurisdiction, procedure, and factual background. UnfairLaw structures all three layers, creating a coherent representation of the case. This representation can then be used for analysis, drafting, and strategy.
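One way to picture those three layers is as a small graph. Entities are nodes, relationships are source-cited edges, and context is a set of case-level attributes. The Python sketch below is an illustrative assumption, not a description of NinjaAI's or UnfairLaw's internal data model, and `CaseGraph` and its methods are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class CaseGraph:
    """Hypothetical case model: entities, source-cited relationships, and case context."""
    context: dict                                   # e.g. jurisdiction, procedural posture
    entities: dict = field(default_factory=dict)    # entity id -> kind (party, witness, document...)
    relations: list = field(default_factory=list)   # (subject, relation, object, source) tuples

    def add_entity(self, entity_id: str, kind: str) -> None:
        self.entities[entity_id] = kind

    def relate(self, subject: str, relation: str, obj: str, source: str) -> None:
        """Record a relationship; both endpoints must already be known entities."""
        for entity_id in (subject, obj):
            if entity_id not in self.entities:
                raise KeyError(f"unknown entity: {entity_id}")
        self.relations.append((subject, relation, obj, source))

    def about(self, entity_id: str) -> list:
        """Return every relationship in which the entity appears on either side."""
        return [r for r in self.relations if entity_id in (r[0], r[2])]


case = CaseGraph(context={"jurisdiction": "hypothetical state court", "posture": "discovery"})
case.add_entity("Smith", "party")
case.add_entity("Acme Corp", "party")
case.add_entity("Ex. 2", "document")
case.relate("Smith", "sent", "Ex. 2", "Ex. 2, p. 1")
case.relate("Ex. 2", "received_by", "Acme Corp", "Ex. 9, p. 3")

for subject, relation, obj, source in case.about("Ex. 2"):
    print(f"{subject} --{relation}--> {obj}  [{source}]")
```

Rejecting edges to unknown entities keeps every relationship anchored to something already in the record.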

This approach reflects a broader shift in how intelligence is created. Instead of optimizing for retrieval, UnfairLaw optimizes for understanding. Instead of asking users to query documents repeatedly, it presents synthesized insight. This is the future of legal work, where machines handle structure and humans handle judgment. UnfairLaw sits at that intersection.

The Strategic Impact of Clarity

Clarity changes outcomes. When a case is understood clearly, decisions improve at every stage. Motions are more precise. Discovery is more targeted. Negotiations are more informed. Judges respond to arguments that are grounded and coherent. Opposing counsel adjusts strategy when confronted with organized records. This ripple effect compounds over time.

UnfairLaw delivers clarity as a repeatable output rather than a heroic effort. Users do not need exceptional memory or endless hours to understand their cases. The system provides that understanding on demand. This predictability reduces stress and improves planning. It also democratizes access to high-quality legal preparation.

In settlement contexts, clarity creates leverage. When one side demonstrates mastery of the record, the other side reassesses risk. UnfairLaw does not negotiate settlements, but it creates the conditions for favorable resolution. In many cases, that alone justifies its use.

The Future of Litigation Is Intelligence

The future of litigation will not be defined by who can research the most cases or draft the longest briefs. It will be defined by who can see the record most clearly and act on that understanding fastest. UnfairLaw embodies that future. It replaces manual chaos with structured intelligence and empowers users to operate at a higher level.

This is not about replacing lawyers or bypassing courts. It is about aligning legal work with the realities of modern information volume. As evidence continues to grow, intelligence must scale with it. UnfairLaw provides that scalability in a form that respects legal norms and human judgment.

For attorneys, it is a force multiplier. For pro se litigants, it is a lifeline. For the legal system, it is a step toward coherence. UnfairLaw turns information into insight and insight into action. That is the difference between research and intelligence, and it is where litigation is heading.
